Growing up as a gamer in the age of the internet, you get to hear a lot about games that came out years ago. The common consensus among people that grew up in the 80s/90s is that games were so much better then. According to them, while we still get good games today, the types of games we got back then had more thought and care put into them.

Is this actually true? Well, there are points that could be made for both sides, but I do think there is some slight bias going on with these types of people.

On the Side of Games Being Better in the Past

You see this type of argument not just with games, but with pretty much anything. Movies, books, music: really any medium was apparently better back in its early days.

And there’s some truth to this, especially in film. We’re in an era of sequels, reboots and remakes that are simply made to make money, and many are pretty bad because of that. That right there is exactly the problem.

The Way People Think About Making Products Has Changed

Back when film was first invented, it most likely wasn’t viewed as a lucrative art, but more of a novelty. So the people who made the most money were those that tried to tell the best stories possible. I mean, films were in black and white; if you wanted to show something on your film you had to really think hard about how to show it. Now we have CGI and we can show anything we want, so not much skill is involved on the director’s side.

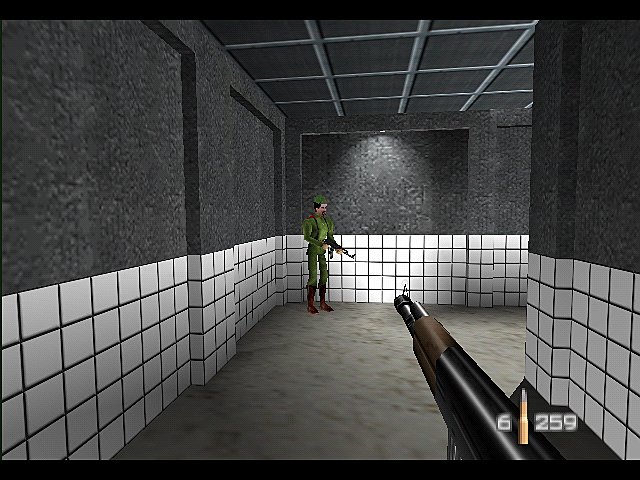

This is the same for Video Games. Back in the 80s, we couldn’t just make a 3D explorable world with plenty of things to do. Games were in 2D and it was pretty hard to show anything due to the limitations on graphics. Now we can slap a first-person shooter formula onto a large 3D map and bam! The latest Far Cry game is out.

We Can Make So Much Money Through Video Games by Barely Trying that There’s no Point in Trying at All

This, right here, is the discovery that large companies like Ubisoft and EA made that creates all of the negative reception today. It’s why indie developers, who have to try much harder to be seen by the population, are our source of good design nowadays.

Many of the games with large budgets that get heavily promoted have very similar mechanics to each other. Usually there’s a basic RPG leveling up mechanic, stealth, shooter mechanics and an open world. None of these are particularly bad, but there’s little variety.

If you read an interview from game developers in the 90s, they’ll say things like “With this game, we tried to do this”, or “we wanted to achieve the feeling of this”. If you read an interview with developers today, even indies, they’ll say something like “we tried to recapture the feeling of this”. There’s a focus on doing what was once done well.

It’s not bad to try to recreate the best of the past, but we see it way too often.

Instead of looking forwards, we’re looking back

Games that try to invent new things, or just games that have some real thought put into them, still exist, but they’re too few and far between. But then again, there were a lot of bad games back in the 80s.

On the Side of Games Being Better Now

The Angry Video Game Nerd is a pretty funny show. It’s fun to look at the variety of games from the past that were just plain terrible, and watching a comical critique of them is entertaining to say the least.

It’s interesting to me that people can watch and laugh at shows like this, before turning around later and unanimously agreeing that ‘games were so much better back then.’ Sure, there are a ton of bad games now, but there were a ton back then as well.

Not only that, but there were definitely companies back then that made games solely for money; it’s nothing new. The number of rip-offs, clones, lazy movie tie-ins and buggy-to-the-point-of-impossible-to-play games made for consoles in the 80s and 90s is massive. So why do we ignore these games?

Confirmation Bias

Confirmation bias is the idea that no matter how much evidence a person sees, their opinion on the subject will always be reinforced. Their opinion or theory is constantly being confirmed, no matter what. It’s almost like they only see what they want to see.

Basically, we remember the really great games from the past, because those had a massive impact on us. We also remember the failures from the present, because of hype culture and the focus on sequels. Thus, we form the opinion that ‘Games were better when I was a kid’, and then ignore any bad games from back then, and any good games now.

Confirmation bias is a very human habit. It’s not really something that needs to be fixed, but something that needs to be considered when forming opinions.

In reality the number of bad games per year has remained relatively stable. However, the argument still remains that the good games of this generation are still not quite as good as those that came before. Is it true that we just don’t get the same quality of games as before?

Well, no…

To be honest, at the risk of sounding obnoxious, I’m not sure if this is even debatable.

Technology has improved so much that if you compare a game from 1980 to a game that came out recently, the older game just doesn’t hold up. How could you compare a 2D game on the NES that looks like it’s made out of Lego, with gameplay as stiff as concrete, to some of the most refined, artistic games that have come out recently?

I understand that I probably sound like some kind of deluded, uninformed casual gamer who only cares about specs, but think about it. We have games that replicate the type of gameplay that retro games provided, but thanks to technological leaps and improvements in the way we design games, there’s no way that they could be worse.

Most games today are designed by fans of video games, whereas back in the 80s every single developer was just a programmer who’d probably only just heard of video games. Developers today understand more of what makes a game fun, making the mistakes developers made back then incredibly visible.

Yes, there are games that stand the test of time; however, I’d say most of these games were in the SNES era, and they’re still few and far between. During the NES/Mega Drive and the N64/PS1 eras, we were still figuring out gameplay in 2D and 3D respectively, so a lot of those genres have been perfected more recently.

There are definitely exceptions to the rule. Castlevania: Symphony of the Night had a number of mechanics, such as familiars and hidden moves, that I’ve never seen in a metroidvania title since (something that really bugs me). But if you’ve never played a game that people praise as one of ‘the great games from back then,’ there’s a good chance you won’t find it as great as they do.


I probably need to stress that not every game that comes out today is better than those from the 80s. There are some terrible games that have come out within the last year. What I’m arguing is that the best games of today are better than the best games of back then.

Here’s something I want to ask you: if a terrible game from recent times had come out earlier, how would it have been remembered? If we took a mess of a game, like Ride to Hell: Retribution, and released it back when people were still playing Pac-Man, there’s a very good chance that it would have gone down as one of the best games of all time.

And we’re starting to wake up to this fact as well. There’s plenty of really good analyses about legendary games that go into the problems with them, such as Arin Hanson’s amazing analysis on Ocarina of Time.

But this raises another question: why did we think those games were great in the first place? What made us believe that those games were better than whatever came out afterwards?

We Were Children

I’m actually quite jealous of children, and I miss being one. When you’re a child, anything can be interesting. Well not everything, I do remember being bored a lot as a child, but when it came to video games, it was a lot easier to find them interesting.

There are two reasons for this. The first is imagination. It’s a known fact that children have a stronger imagination than adults; they’re able to pretend and make games for themselves. Video games, even those made with limited technology, are enough to fill in the gap between reality and imagination. A child is able to play a game where they fly, and believe that they themselves are flying. To put it simply, children get immersed in a game so much more easily.

Adults can’t do this nearly as well, which is why, as the average age of gamers gets older and older, there’s a common focus on graphics. Better graphics make games look more real, and fill the gaps that the lack of imagination leaves behind.

The second reason is that there is a limited supply of games when you’re a child. Video games are usually supplied by your parents, and the average parent probably won’t buy their children new games frequently, usually only for birthdays and Christmas.

The thing is, when you got a new game you had to like it. It could be months before you got another game, so you needed to find enjoyment in it, otherwise you’d have no games to play.

Nostalgia

We, as gamers, have so much nostalgia for games that we played when we were young, and that’s not a bad thing. Nostalgia is something that I’m incredibly thankful for, it allows you to relive the past. The word shouldn’t always be used negatively to explain why people like something that you don’t.

The issue is when we allow nostalgia to affect our opinions. One of the worst things about the gaming community is the idea of ‘these games that I played when I was a kid are fantastic, but the games you liked when you were a kid were terrible.’ Being born in the late 90s, I read magazines and saw people online telling me that some of my favourite games back then were actually really bad. The worst feeling was playing their favourite games and realising that some of them were just as bad.

Nostalgia could be the reason why people think past games were better, but there’s something else that’s bigger than that, and it questions how we critique games.

Leaps and Bounds

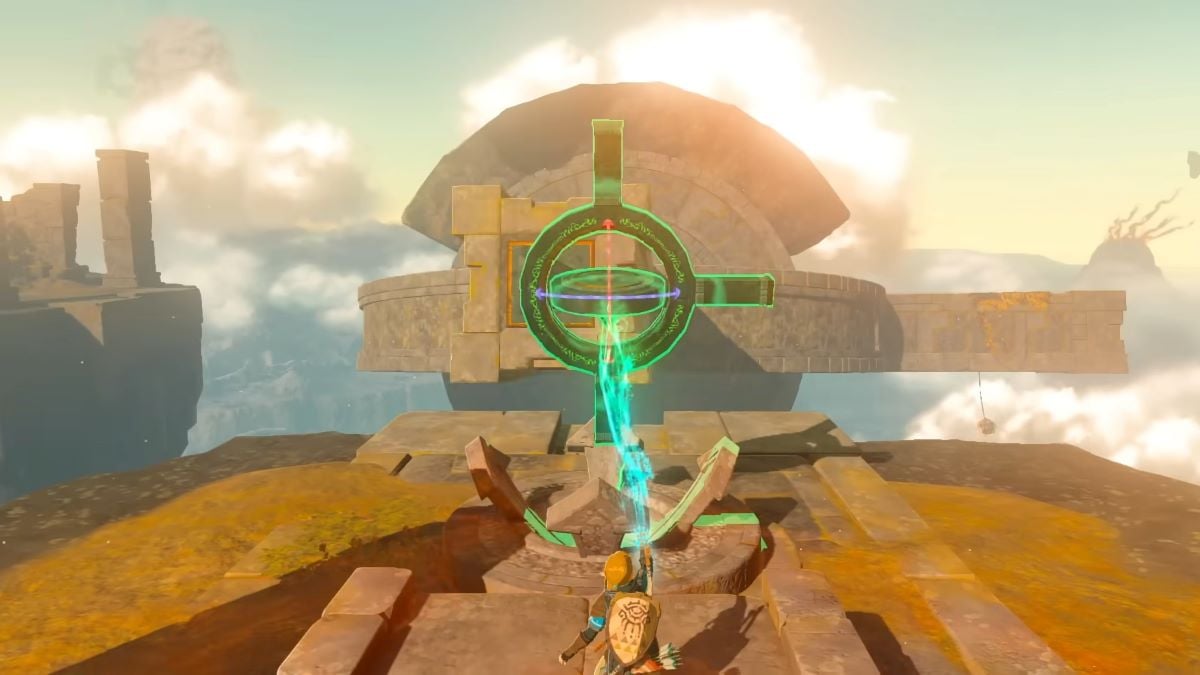

Think of a popular series of games that has been running for a long time, from the late 80s to the present day. Got one? Now think of what is generally considered the best in that series.

It’s highly likely that the game you just thought of was made in the late 90s. Super Mario 64, Ocarina of Time, Final Fantasy VII and Sonic Adventure have each been considered ‘The Best in the Series’ for the majority of their series’ run. While most of them have either lost their title or are constantly argued about in recent times, there’s no doubt that for a long while after their release people did talk about them a lot.

What do they all have in common? They were all the first in the series to be in 3D.

While whether or not our world has 3 dimensions goes into scientific theory I’m not willing to explain, it is a fact that humans perceive the world in 3 dimensions. Thus, games making the leap to 3D was huge. It made games so much more immersive because they now looked and acted much more like the real world. The jump to HD wasn’t nearly as big.

We have games now that have further improved on what those first 3D games did, but we remember those originals much more. Why is that?

The Brain Works with Comparison

A century ago, we didn’t have television. A millennium ago, we didn’t have the majority of things that make up our daily lives.

To someone living in this day and age, it seems that life would be almost unlivable without the things that we have now. But there was a time when we didn’t. There was a time when we didn’t have air conditioning, security, or medicine that allowed us to live past the age of thirty — humans didn’t even have language itself, just some grunts.

Now this is pretty obvious, but the point is that humans still made it through their lives. This is because by comparison, the lack of these things didn’t matter to them. It probably wasn’t very clean thousands of years ago, but at least an opposing kingdom wasn’t waging war against them. In the current era, if something isn’t clean, it gets to us so much that we have to take action and use some kind of product. By comparison, there isn’t that much in our daily lives that’s worse.

Humans, and pretty much all animals, are able to feel happy in a situation simply because it’s better than what they usually experience. Hence why when video games moved from 2D to 3D, it was very impressive at the time, but not so much any more.

You already know this, so why is this important?

When a game, or anything really, comes out that’s new, different or innovative in some way, it’s impressive when compared to everything else. When another game like that comes out, it isn’t as impressive. It’s already been done. We compare it to what already exists, we see something like it that has already occurred, and it isn’t as interesting.

Humans focus more on the leaps in innovation than the quality itself

We remember being wowed by Ocarina of Time, our minds blown due to the new immersive world. We weren’t as wowed by its direct follow-up Majora’s Mask. It didn’t blow our minds in the same way. It couldn’t. It was only about a decade later that people started to come out saying that Majora’s Mask was actually an improvement on what Ocarina had established.

It’s these leaps that are much more memorable, and had a larger impact on those that played them at the time. All that players cared about was that the game was so much more immersive, the gameplay didn’t really matter.

This goes back to what I said before about releasing a terrible game from today back in the 80s. If Ride to Hell: Retribution had been released decades ago, the 3D graphics would have made a massive impact on the player. While the game’s graphics were awful when compared to games that came out the same year, the graphics alone would have been so impressive to players in the 80s that it would have stuck in their minds more than any other game of the time.

I think this point alone raises so many interesting questions about the way we compare games to others from history.

Video games are about an experience, they’re all about having fun. So there’s no problem when gamers have more fun with a game simply because it’s an innovation. However, in the age of the internet, where we discuss games as an art, we do need to take careful consideration about why we think certain games are great.

When it Comes Down to it

Just because when you played a game you thought it was fun doesn’t mean that it’s a masterpiece of design. Many of the games that we hail as classics were fun when the people who decided they were classics played them. They definitely made major leaps and are impressive on a pioneer level, however they do still contain flaws in their design.

We treat ‘classics’ as lessons in game design, but we don’t seem to realise that the reason we liked them so much was because they led the charge. We need to think of these games more as innovators rather than teachers.

But it’s All Human Nature

These things aren’t a problem that certain people have that we need to fix. We don’t need to go around telling people that they’re wrong and they need to change the way they think. It’s human nature.

Confirmation bias, the way we think of video games as children, and enjoying leaps more than quality: it’s all very human. Everyone does it. All that I’m saying is that we need to question the way that we regard ‘classic’ media.

Do we Need to Change the Way We Think About Video Games?

It’s hard to say. To remove these biases we would have to change our very nature. I would, however, make the statement that critics who support their opinions with knowledge of good game design are more reliable than those who simply state that certain aspects of the game were ‘fun’, since ‘fun’ is very much up to taste.

The thing that I’m truly worried about is that certain games may not get the attention they deserve simply because we are comparing them to ‘classic games.’ We are constantly comparing current games to ‘classics’ such as Ocarina of Time, wondering why games can never be as good as those. But in reality, all these biases are in play, and what we think are these ‘fantastic masterpieces’ are really delusions that never existed.

It’s possible that as video games as a medium get older and older, the way we critique video games gets more and more unfair.

Published: Oct 3, 2016 07:48 pm